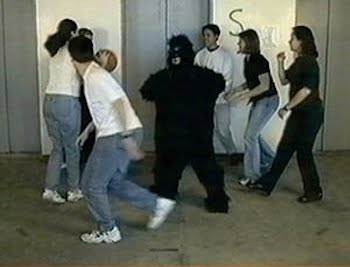

the invisible gorilla

I have just read an article about a famous experiment (that you can try for yourself here…) in which a large number of people focusing on counting ball passes on a video are completely unaware of someone in a gorilla suit walking on screen, beating its chest to camera, then walking off. This counter-intuitive result is used to show how blind we can be to what we’re not paying attention to.

This issue of attention is interesting enough, but something else occurred to me (actually, this occurs to me quite a lot *grin*): that human vision is nothing like a series of images caught on film or video – in which each frame is recorded with every detail that the camera is capable of recording. This analogy may seem an obvious one to make, but it is wholly false. We have the illusion that we are seeing a complete picture, but in fact what we see is much more analogous to the way in which we parse a sentence – a sequence of words that, together, when processed by our brain, conjure up a complete meaning. Let’s not go into what the exact equivalent of ‘words’ might be in what we are seeing – what is seems to me interesting is that we similarly construct the ‘meaning’ of what we’re seeing by assembling it from a few large pieces – and the pieces that we choose to build our ‘visual sentence’ from are determined by what we’re paying attention to. Thus, because we’re not paying attention to it, the gorilla simply is not one of the ‘pieces’ and so forms no part of what we ‘see’.

4 Replies to “the invisible gorilla”

Comments are closed.

There is another lesson to be learned here: how easy it is to direct and mandate our attention.

true

A lot of noise is filtered out by our brain. And when concentrating on a certain task, the noise-filter is amped up. It’s the same with audio… Noise is filtered out. Which is a good thing, otherwise you’d constantly hear the clock in your living room, the zooming of the computer ventilator, (the whining of the dog wanting to be let in the house 😉 )…

though I agree with you that we are able to ignore other stimuli than the ones we’re focused on, I disagree with your notion of what lies behind this. I don’t believe there is any ‘noise-filter’ that is ‘amped up’, on the contrary, the reason we do this is exactly because of how limited our ability to process sensory input is. The more resources we throw at something we’re focusing on, the less are available to dedicate to other things… thus, distractions are ‘filtered’ out by default… The ‘invisible gorilla’ is a perfect example of this…